Installation Overview

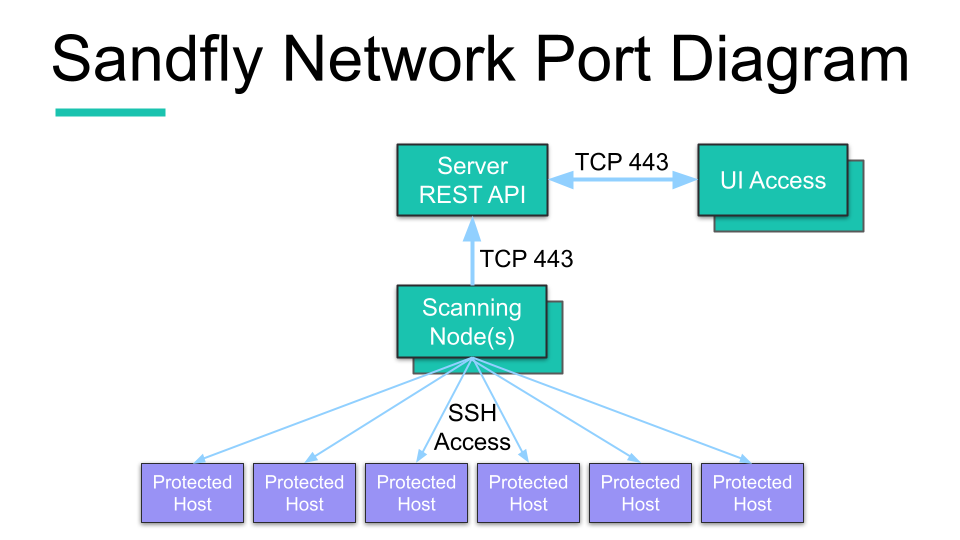

Architecturally, Sandfly uses a core server for the database, user interface and REST API. Remote systems are scanned by separate scanning nodes. Optionally, the server can connect to a remote database, so customers can host their own distributed, fault-tolerant database cluster separately from the server.

Single, Multiple and Jump Host Support for Nodes

Scanning nodes do the grunt work of contacting hosts and checking them for problems. Nodes can be set up to run on isolated network segments and communicate back to a single central server.

You can also distribute multiple nodes across different networks. For instance, nodes can run at several cloud providers or inside several private networks while being controlled from a single point.

Finally, nodes can be set up to use jump hosts to reach isolated networks: a node first connects to an SSH choke point and then connects through it to the internal hosts.
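To make the node and jump-host topology concrete, here is a minimal Python sketch of how a connection path might be chosen. It is not Sandfly's implementation or configuration format: the `Host`, `Node`, and `plan_connection` names, the per-network `jump_hosts` mapping, and the example addresses are all hypothetical. It assumes each node knows which network segments it can reach and, optionally, which SSH choke point to pass through for a given segment.

```python
from dataclasses import dataclass, field
from typing import Dict, List


# Hypothetical model: not Sandfly's real data structures.
@dataclass
class Host:
    address: str
    network: str  # network segment the host lives on


@dataclass
class Node:
    name: str
    networks: List[str]  # segments this node can reach
    # Hypothetical: SSH choke point to go through for a given segment.
    jump_hosts: Dict[str, str] = field(default_factory=dict)


def plan_connection(host: Host, nodes: List[Node]) -> List[str]:
    """Return the hop sequence a node would use to reach `host`.

    Picks the first node that can reach the host's network; if that node
    has a jump host configured for the network, it is inserted as a hop.
    """
    for node in nodes:
        if host.network in node.networks:
            hops = [node.name]
            jump = node.jump_hosts.get(host.network)
            if jump is not None:
                hops.append(jump)  # connect to the choke point first
            hops.append(host.address)
            return hops
    raise LookupError(f"no scanning node can reach network {host.network!r}")


# Example: one node at a cloud provider, one in a data center that reaches
# its internal segment through a bastion (all names are made up).
nodes = [
    Node("node-cloud-a", ["cloud-a"]),
    Node("node-dc", ["dc-dmz", "dc-internal"], {"dc-internal": "bastion.example.com"}),
]
print(plan_connection(Host("10.0.5.20", "dc-internal"), nodes))
# ['node-dc', 'bastion.example.com', '10.0.5.20']
```

Keeping the jump host as per-segment data, rather than per-host, mirrors the idea of a single choke point guarding an isolated network.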

The scanning nodes receive orders from the server specifying which systems to scan and which problems to look for on the remote hosts, using a random selection of sandflies. The nodes perform the required checks by pushing their sandflies to the remote hosts, where the sandflies investigate and report results. Any suspicious activity found by a sandfly is reported back to the server for user alerting and further action.
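The order-and-report cycle can be modeled in a few lines of Python. This is an illustrative sketch only, assuming a sandfly can be treated as a named check that returns `True` when it finds something suspicious; `ScanOrder`, `Alert`, `run_order`, and the check names are hypothetical and do not reflect Sandfly's actual API or sandfly catalog.

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical: a sandfly modeled as a check that takes a host name and
# returns True if it found something suspicious.
Sandfly = Callable[[str], bool]


@dataclass
class ScanOrder:
    hosts: List[str]          # systems the server wants scanned
    sandfly_names: List[str]  # checks the server allows for this scan
    sample_size: int          # how many checks to pick at random per host


@dataclass
class Alert:
    host: str
    sandfly: str


def run_order(order: ScanOrder, library: Dict[str, Sandfly],
              rng: random.Random) -> List[Alert]:
    """Run a random selection of checks on each host; return only findings."""
    alerts: List[Alert] = []
    k = min(order.sample_size, len(order.sandfly_names))
    for host in order.hosts:
        # Random selection of sandflies for this host, without repeats.
        for name in rng.sample(order.sandfly_names, k):
            if library[name](host):  # "push" the check and read its verdict
                alerts.append(Alert(host, name))
    return alerts  # what a node would report back to the server


# Example with made-up checks; only web-02 looks suspicious.
library: Dict[str, Sandfly] = {
    "hidden_process_check": lambda host: host == "web-02",
    "ssh_key_check": lambda host: False,
    "suid_file_check": lambda host: False,
}
order = ScanOrder(["web-01", "web-02"], list(library), sample_size=3)
alerts = run_order(order, library, random.Random(0))
print(alerts)  # [Alert(host='web-02', sandfly='hidden_process_check')]
```

Passing a seeded `random.Random` keeps the selection reproducible for testing. With `sample_size` smaller than the number of allowed checks, each host may receive a different random subset on each scan.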

Sandfly High-Level Architecture with a Single Node

During the installation we will set up the core server and scanning nodes. The server consists of a web server, a REST API and a PostgreSQL database. The nodes communicate with the server via the API, while multi-threaded, high-performance scanning engines manage sandfly deployments and process results.
